Jabulani Mcineka

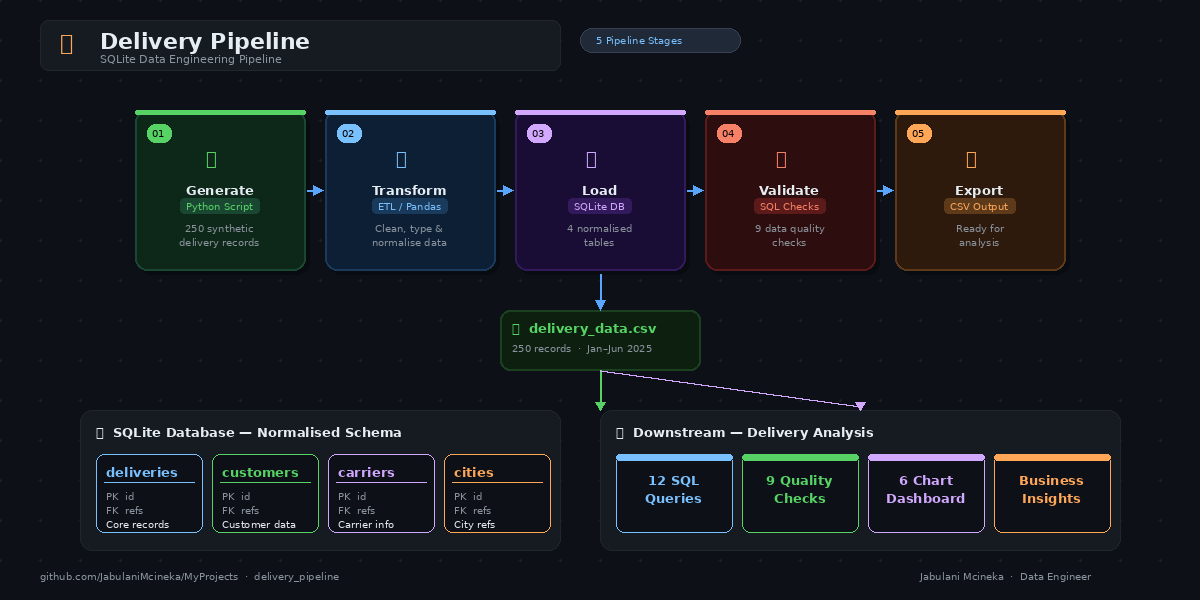

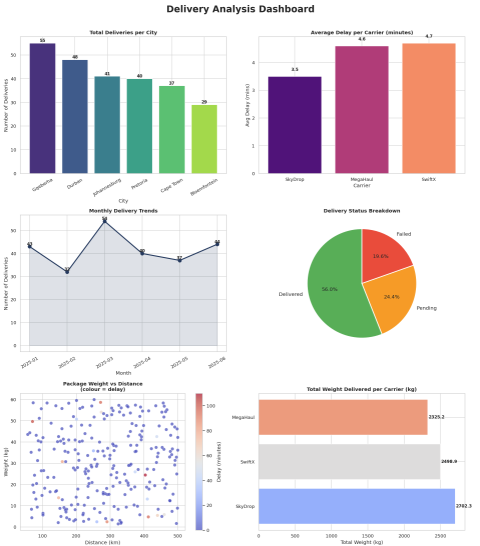

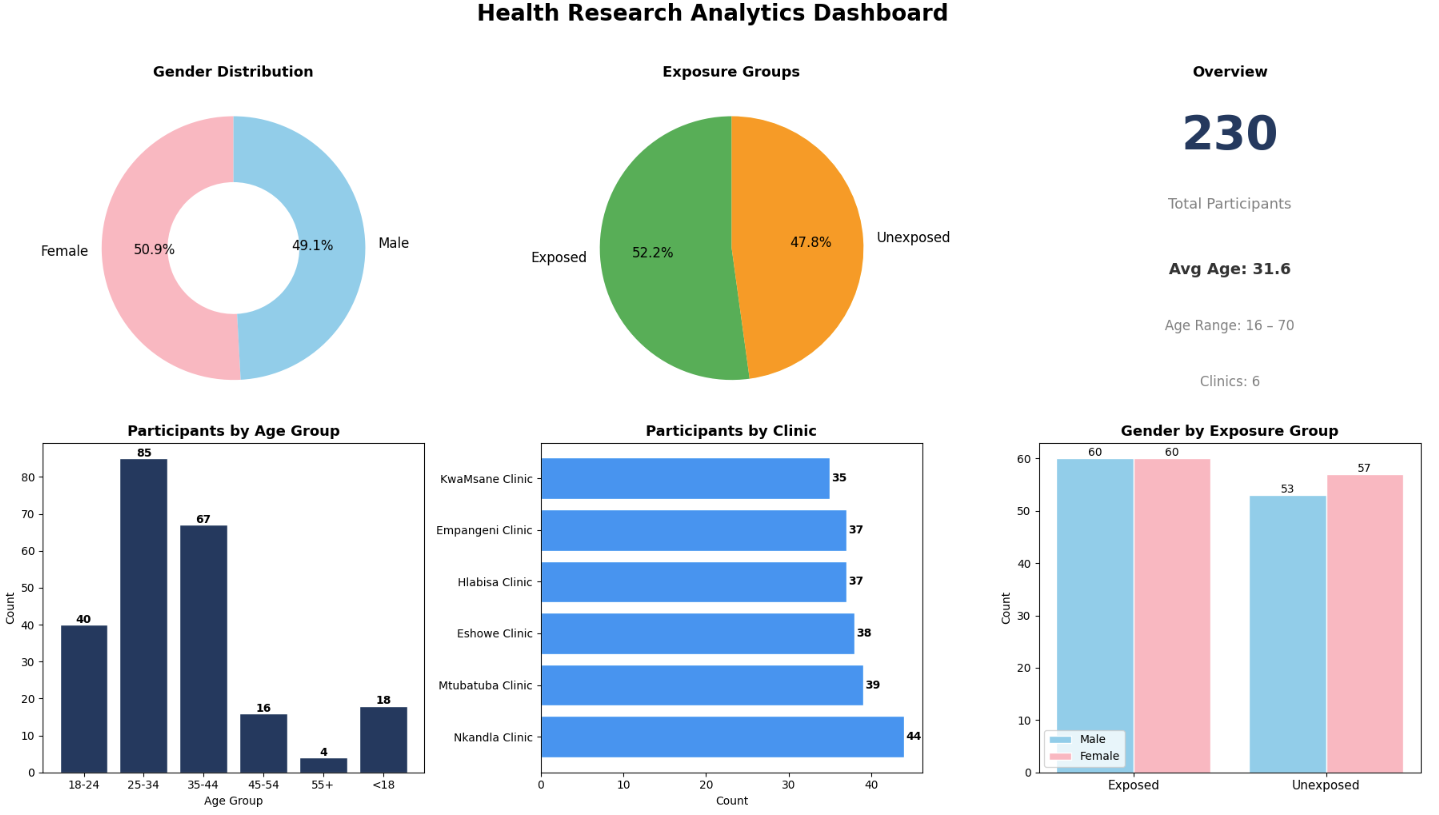

Software Developer · Data Engineer · Cloud Engineer · Data Analyst

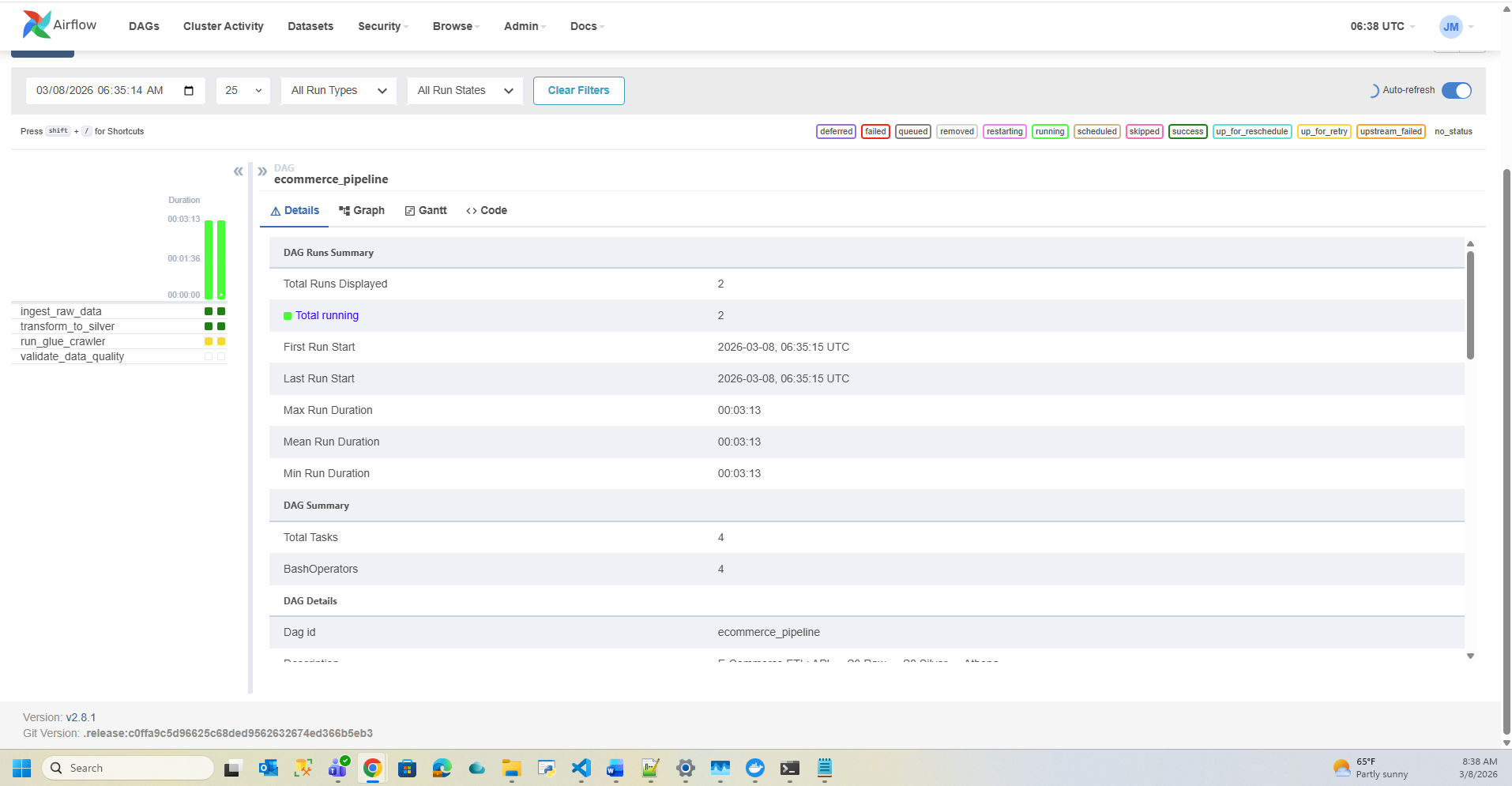

Qualified software developer with expertise in building production-grade data pipelines, cloud infrastructure, and analytical solutions using Python, AWS, SQL, Docker, and Airflow, with a focus on real-world impact.