01

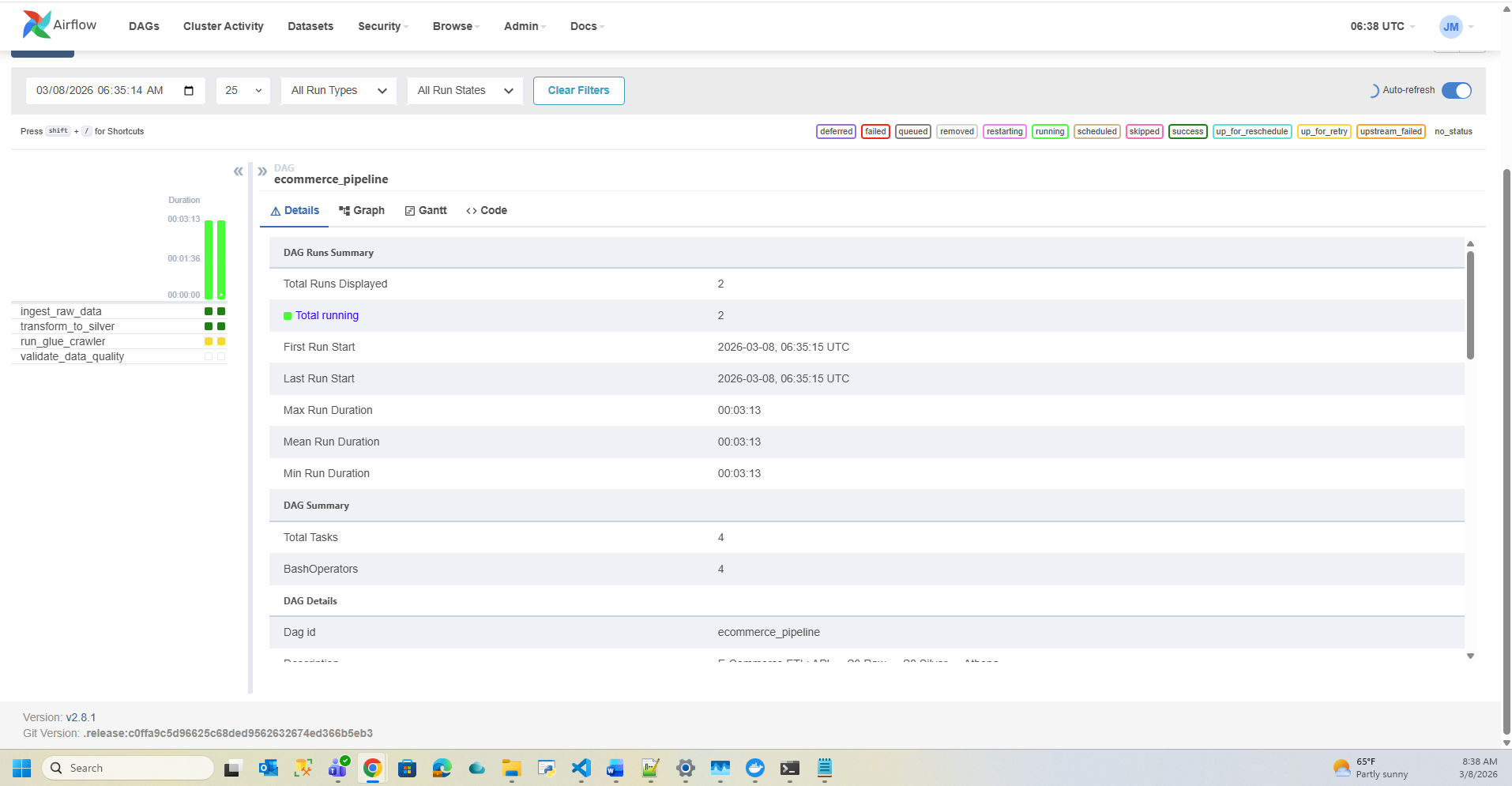

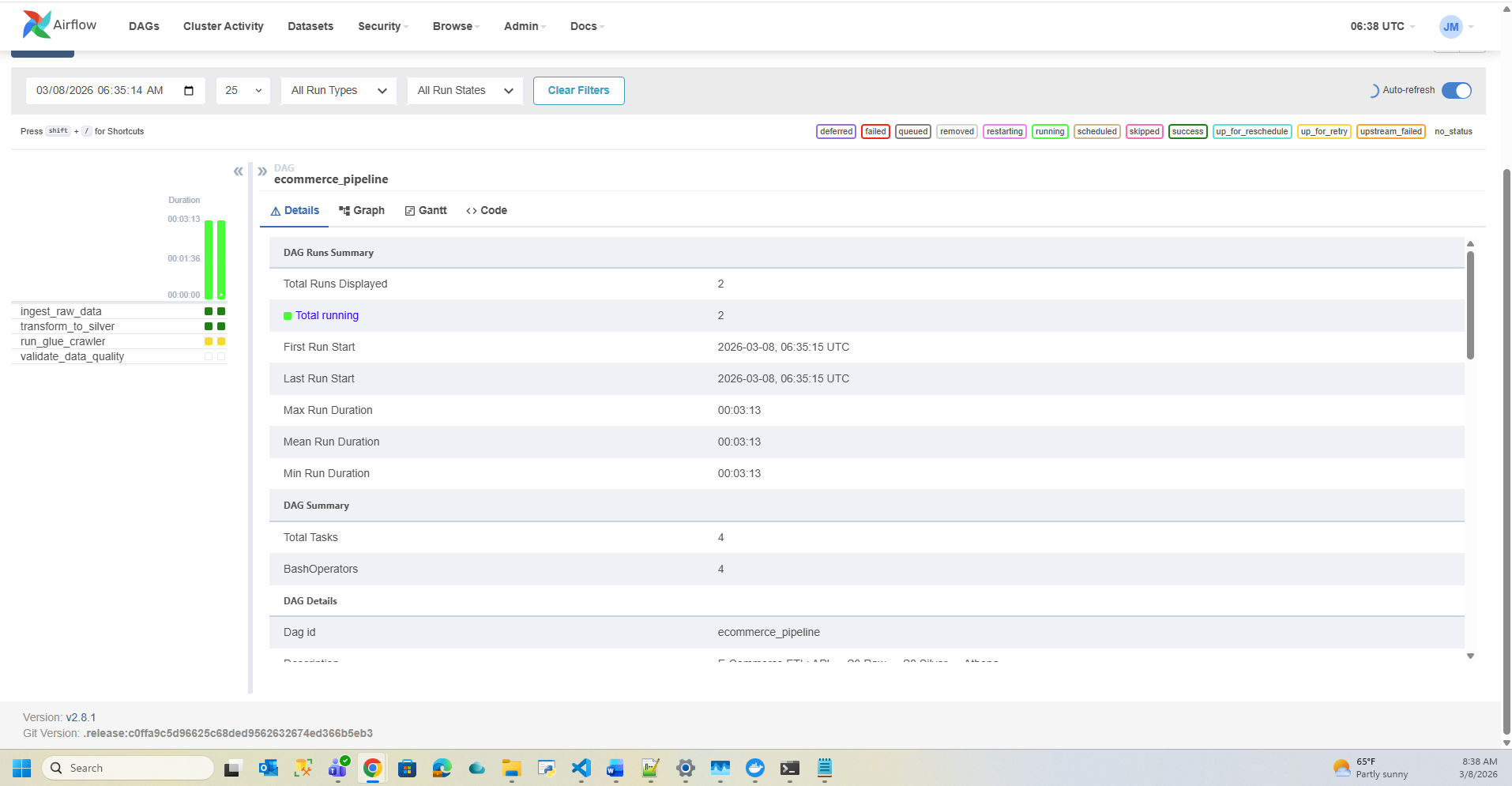

E-Commerce Real-Time Data Pipeline

Production-grade data engineering pipeline on AWS Free Tier. Ingests e-commerce data from Fake Store API, transforms JSON to Parquet via Medallion Architecture, catalogs with Glue, queries with Athena — fully orchestrated by Apache Airflow DAGs running in Docker.

AWS S3

Glue

Athena

Airflow

Docker

Python
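
The bronze-to-silver step of a Medallion layout like the one described above can be sketched roughly as follows. This is an illustrative sketch, not the project's actual code: the function names `to_silver` and `bronze_to_silver`, the output column names, and the assumed shape of a raw product record (an `id`, `title`, `price`, `category`, and a nested `rating` object) are all assumptions. Writing the resulting rows to Parquet would need an extra library such as pyarrow, which is left out to keep the sketch standard-library only.

```python
from __future__ import annotations

from typing import Any, Iterable


def to_silver(raw: dict[str, Any]) -> dict[str, Any]:
    """Flatten one raw (bronze) product record into a typed, flat (silver) row.

    The input field names are an assumed record shape, not a confirmed schema.
    """
    rating = raw.get("rating") or {}  # nested object may be missing
    return {
        "product_id": int(raw["id"]),
        "title": str(raw["title"]).strip(),
        "category": str(raw.get("category", "unknown")).lower(),
        "price": float(raw["price"]),
        "rating_rate": float(rating.get("rate", 0.0)),
        "rating_count": int(rating.get("count", 0)),
    }


def bronze_to_silver(records: Iterable[dict[str, Any]]) -> list[dict[str, Any]]:
    """Clean a batch of raw records and drop duplicate product ids (first one wins)."""
    seen: set[int] = set()
    rows: list[dict[str, Any]] = []
    for raw in records:
        row = to_silver(raw)
        if row["product_id"] in seen:
            continue
        seen.add(row["product_id"])
        rows.append(row)
    return rows
```

In a setup like this, the silver rows would typically be written as Parquet to a separate S3 prefix so that a Glue crawler and Athena can read them as a table.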

02

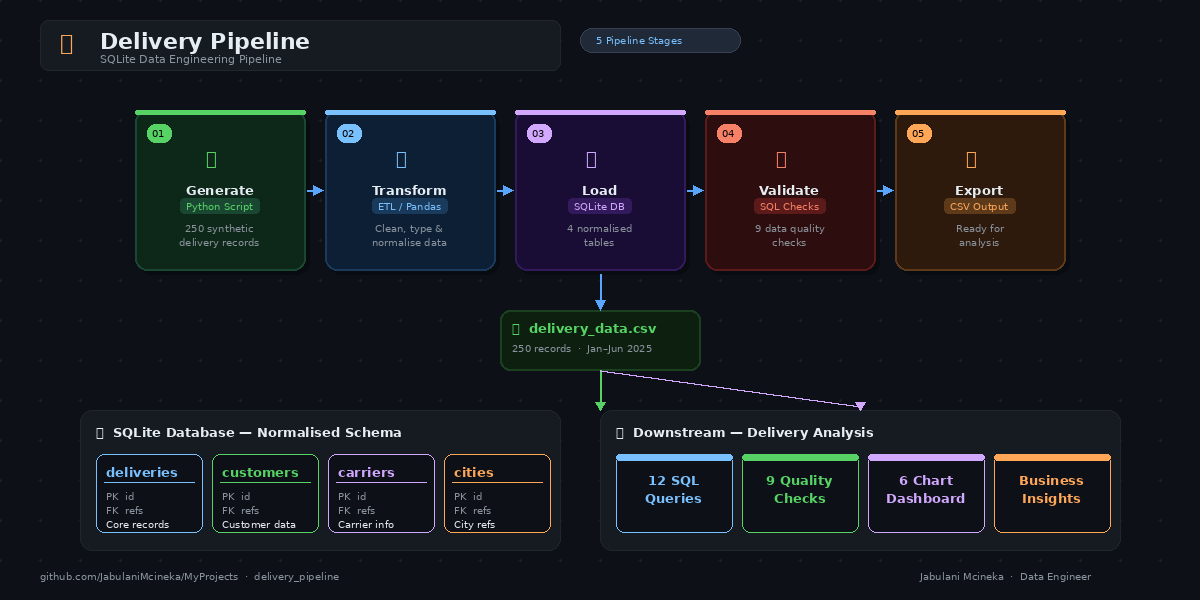

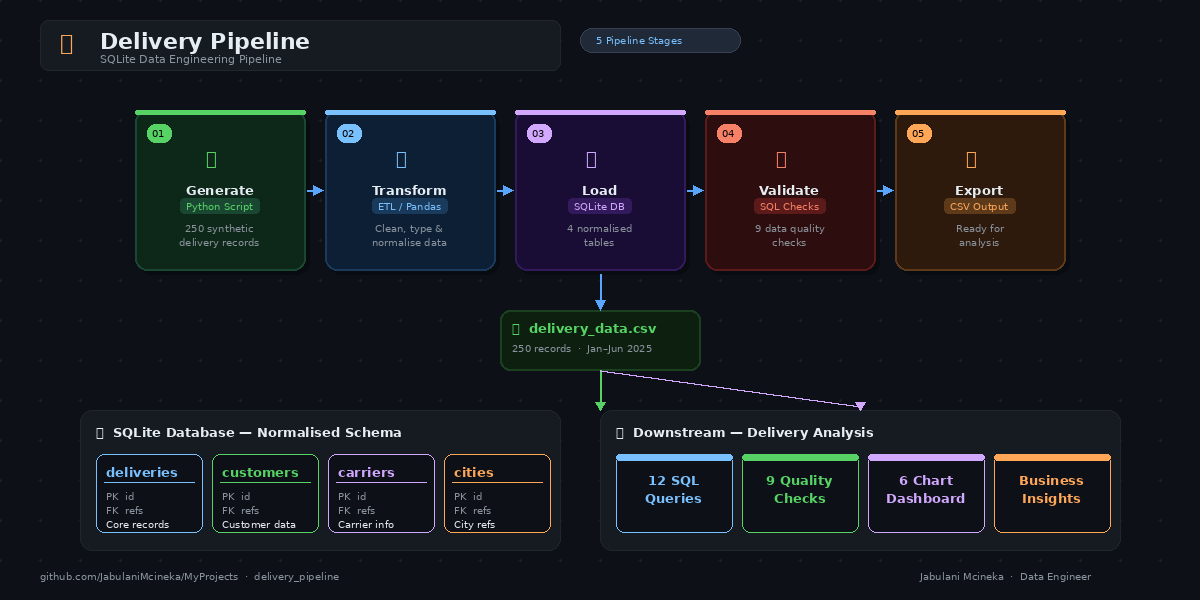

Delivery Pipeline

End-to-end delivery data pipeline that ingests, processes, and analyses delivery data through an automated data engineering workflow built for real-world logistics use cases.

Python

Data Pipeline

ETL

03

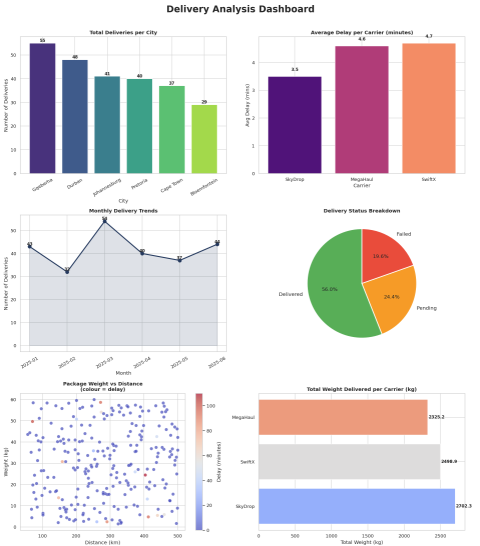

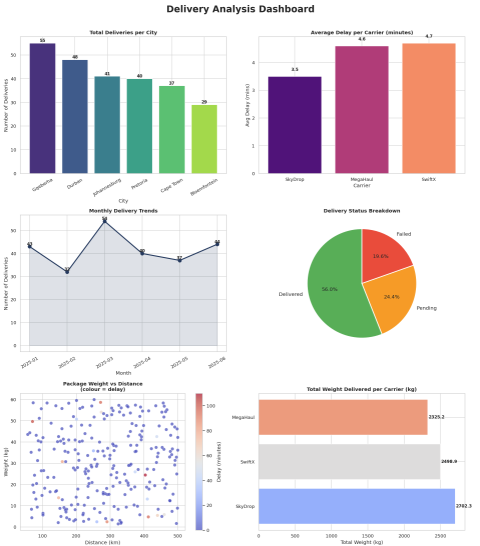

Delivery Analysis

In-depth analysis of delivery data — exploring patterns, performance metrics, and operational insights using Python and data analysis techniques to drive business decisions.

Python

Pandas

Data Analysis

04

Sales Data Warehouse

Designed and implemented a sales data warehouse — structured for efficient querying and reporting on sales performance, trends, and KPIs using modern data warehousing principles.

SQL

Data Warehouse

Python

05

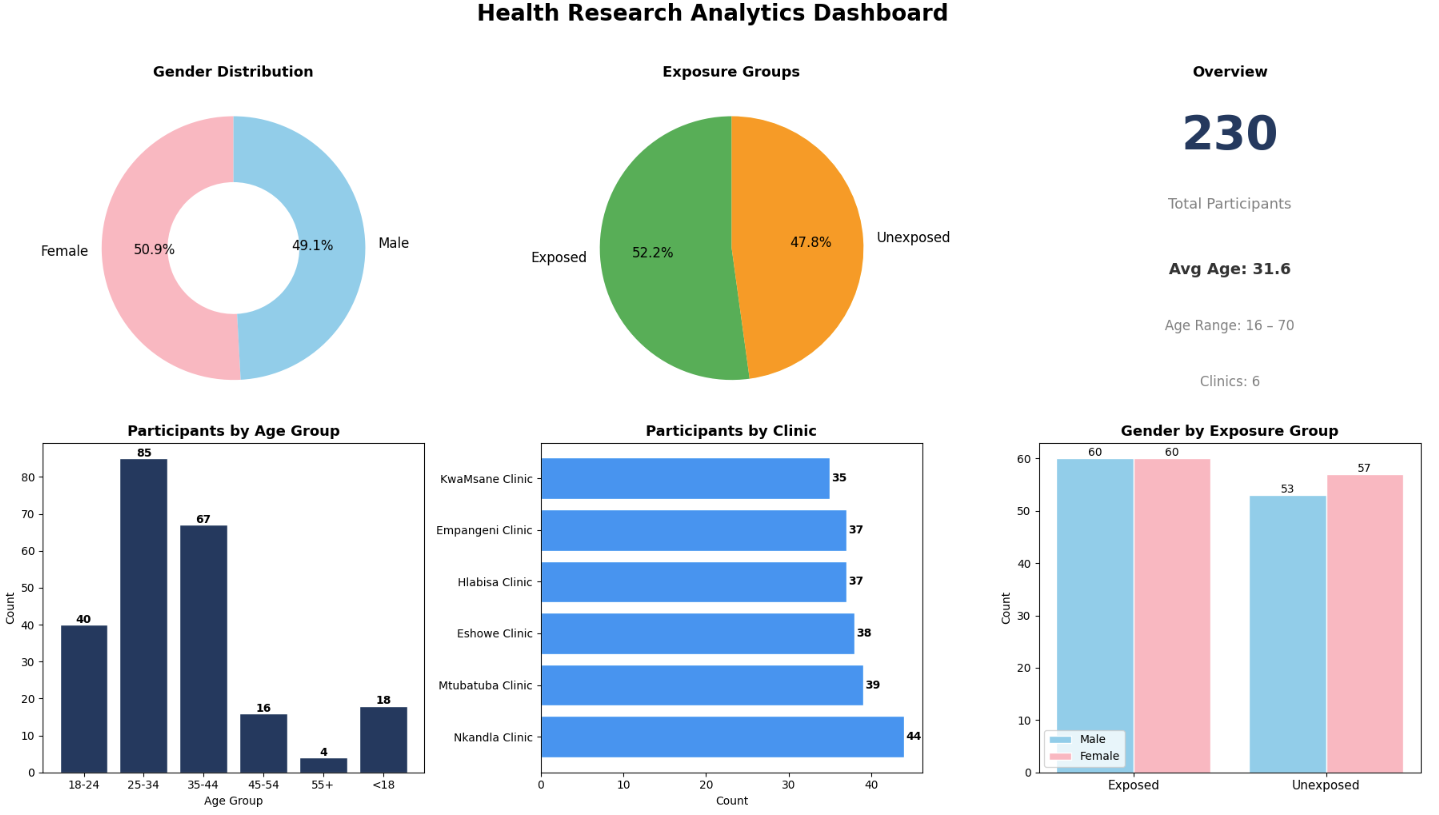

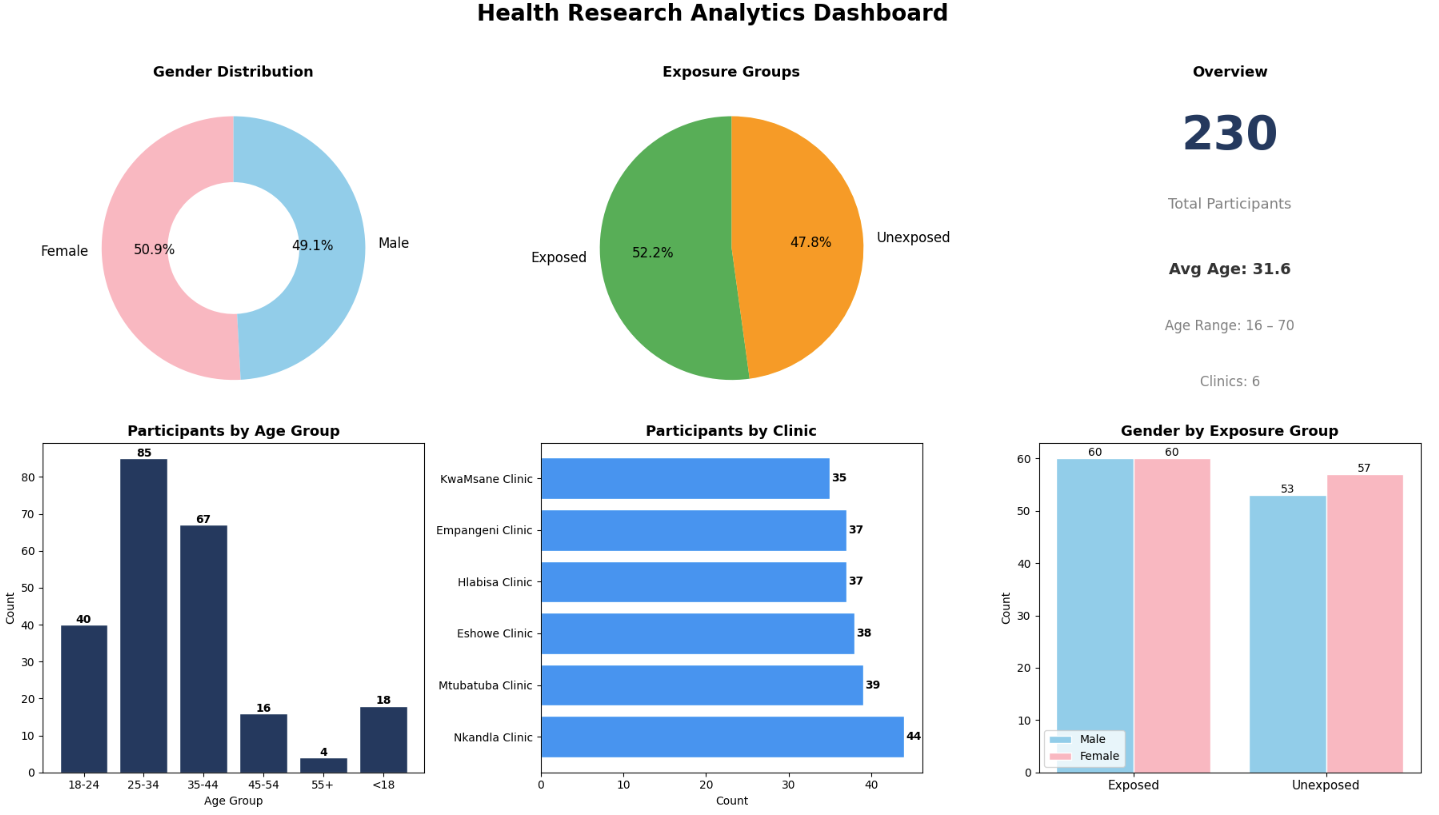

Facility Visits Analysis

Analysis of facility visit data — uncovering usage patterns, peak times, and operational insights to support data-driven facility management and resource planning decisions.

Python

Data Analysis

Pandas

06

AWS EC2 Node + Nginx Setup

Cloud infrastructure project deploying a Node.js application on AWS EC2 with Nginx as a reverse proxy — demonstrating cloud deployment, server configuration, and DevOps skills.

AWS EC2

Nginx

Node.js

Linux

In Progress

07

📘 Data Warehouse ETL Toolkit

Studying ETL design principles and data warehouse architecture patterns based on The Data Warehouse ETL Toolkit by Ralph Kimball & Joe Caserta. Focus areas include ETL design patterns, dimensional modeling foundations, and best practices for building scalable data pipelines — applied alongside Python self-study.

ETL Design

Dimensional Modeling

Data Warehouse

Python

Apr 2026 – Present

In Progress

08

🐍 Python Automation & Data Projects — 100 Days of Code

Hands-on Python development focused on building automation scripts and data processing solutions through daily structured practice. Focus areas include Python fundamentals, file handling, data transformation, automation workflows, and clean, maintainable code. All exercises are documented on GitHub.

Python

Automation

File Handling

Data Processing

Mar 2026 – Present

In Progress

09

📘 Data Engineering Self-Study — Fundamentals of Data Engineering

Self-directed learning grounded in core principles of modern data systems, translating theory into practical implementations through hands-on exercises. Focus areas include data pipeline design, ETL processes, data architecture fundamentals, and end-to-end data flow understanding. Progress documented on GitHub through small projects that bridge concept and practice.

Data Pipelines

ETL

Data Architecture

Python

End-to-End Flows

Mar 2026 – Present